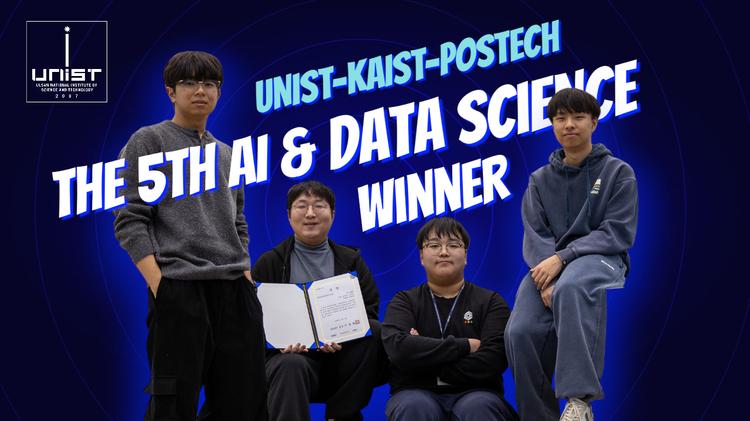

UNIST Secured Second Place at IEEE SaTML 2026

- Community

- JooHyeon Heo

- 2026.03.27

Researchers at UNIST have earned international recognition for developing a method to detect and mitigate hidden malicious triggers in artificial intelligence systems, an emerging threat to the reliability of large language models (LLMs).

Led by Professor Saerom Park of the Department of Industrial Engineering and the Graduate School of Artificial Intelligence, and Professor Sung Whan Yoon of the Graduate School of Artificial Intelligence and the Department of Electrical Engineering, the team placed second in the Anti-Backdoor (Anti-BAD) Challenge at the IEEE Conference on Secure and Trustworthy Machine Learning (SaTML 2026), held in Munich, Germany, from March 23 to 25, 2026.

Backdoor attacks embed hidden signals into AI models during training, causing them to produce unintended outputs when specific inputs—known as triggers—are encountered. Because these models otherwise perform as expected, these vulnerabilities present a persistent challenge for the safe deployment of AI systems.

The competition challenged participants to develop methods capable of reducing the impact of such triggers across a range of applications, including text generation, classification, and multilingual tasks. In response, the UNIST team proposed a unified framework designed to operate effectively across these varied settings.

Their approach integrates model quantization, model merging, outlier parameter detection, and confidence calibration. Together, these techniques enable the identification and suppression of anomalous behaviors while preserving overall model performance. The method does not rely on prior knowledge of attack patterns, making it applicable to a broad class of models and use cases.
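For readers unfamiliar with these terms, the toy sketch below illustrates two of the four named techniques, model merging and outlier parameter detection, on flat lists of numbers. It is not the UNIST team's implementation, whose details are not given here. The uniform averaging, the median-based outlier score, the 3.5 threshold, and every function and variable name are assumptions chosen purely for illustration.

```python
from statistics import median


def merge_models(models):
    """Uniformly average parameters across models of identical shape (assumed merge rule)."""
    n = len(models)
    return [sum(ws) / n for ws in zip(*models)]


def outlier_indices(weights, threshold=3.5):
    """Flag parameters whose robust z-score (median absolute deviation) exceeds the threshold."""
    med = median(weights)
    mad = median(abs(w - med) for w in weights)
    if mad == 0:
        return []  # no spread, so nothing can be called an outlier
    return [
        i for i, w in enumerate(weights)
        if 0.6745 * abs(w - med) / mad > threshold
    ]


def suppress(weights, indices):
    """Zero out the flagged parameters and leave the rest untouched."""
    flagged = set(indices)
    return [0.0 if i in flagged else w for i, w in enumerate(weights)]


# Hypothetical toy "models": model_b carries one implausibly large weight.
model_a = [0.10, -0.20, 0.05, 0.30, -0.15, 0.12]
model_b = [0.12, -0.18, 0.07, 9.00, -0.13, 0.10]

merged = merge_models([model_a, model_b])
flagged = outlier_indices(merged)
cleaned = suppress(merged, flagged)

print("flagged parameter indices:", flagged)
print("cleaned weights:", cleaned)
```

In this toy case, averaging alone dilutes the anomalous weight but does not remove it, so the outlier check still flags it. That gives one intuition for why such steps might be combined; real LLM defenses operate on far larger tensors and use more careful statistics.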

Contributors to the study include JiEun Yun and KiWan Kwon of the Department of Industrial Engineering, and SeungBum Ha of the Graduate School of Artificial Intelligence.

"Even in the absence of prior information about the attack methods, it is possible to meaningfully reduce backdoor risks in large language models," said JiEun Yun. "This work is a step toward ensuring that AI systems can be deployed with greater confidence."

IEEE SaTML is a leading international forum dedicated to advancing research on the security, robustness, and trustworthiness of machine learning systems. Its annual competition serves as a benchmark for emerging approaches to safeguarding next-generation AI technologies.

![[Photo] UNIST Professor Saerom Park](/app/board/attach/image/560829_1775451554000.do)

![[Photo] UNIST Professor Sung Whan Yoon](/app/board/attach/image/560830_1775451554000.do)

![[Photo] From left: UNIST researchers SeungBum Ha, JiEun Yun, and KiWan Kwon (2)](/app/board/attach/image/560831_1775451555000.do)

![[Photo] From left: UNIST researchers SeungBum Ha, JiEun Yun, and KiWan Kwon (1)](/app/board/attach/image/563452_1775458881000.do)